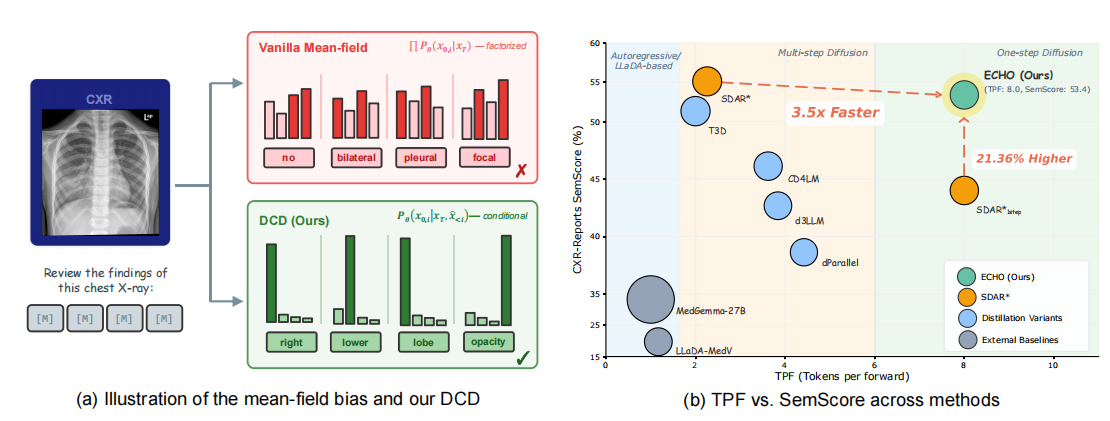

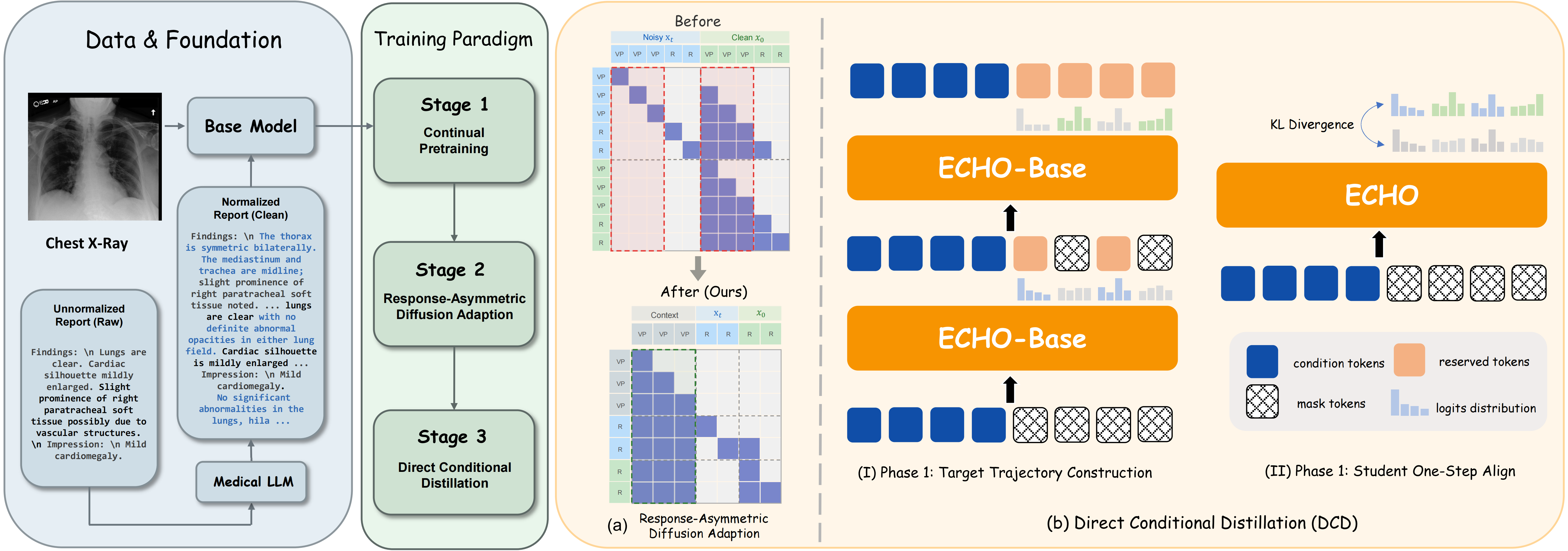

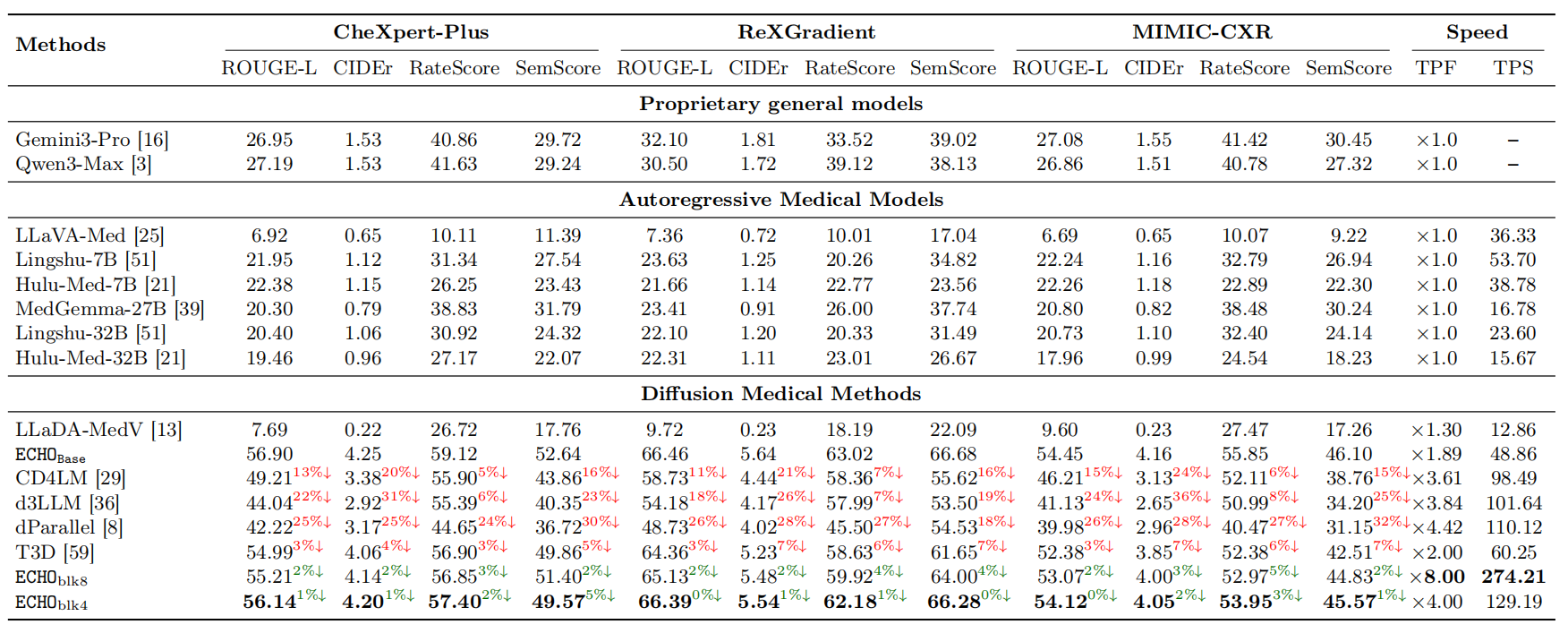

Discrete diffusion language models approximate the joint token distribution with a per-token factorization that treats each position as conditionally independent. This approximation ignores inter-token dependencies, so multi-step remasking is needed to progressively recover output coherence. Each additional denoising step, however, costs an extra model forward pass, which increases inference latency and creates a fundamental quality-speed dilemma. ECHO resolves this through Direct Conditional Distillation (DCD), which constructs non-factorized supervision from the teacher's on-policy multi-step trajectories. This lets the student capture joint token dependencies in a single forward pass per block, achieving multi-step quality at single-step speed.
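To make the cost of the factorized approximation concrete, the following is a minimal toy sketch, not ECHO's implementation. The two-token vocabulary, the `joint` table, and the helper names `marginals` and `factorized` are all hypothetical and chosen only for illustration. It shows that the product of per-position marginals places probability mass on token pairs the true joint distribution never produces.

```python
from itertools import product

# Toy joint distribution over two token positions (hypothetical example,
# not taken from ECHO): only two coherent phrases carry probability mass.
joint = {
    ("no", "effusion"): 0.5,
    ("small", "pneumothorax"): 0.5,
}


def marginals(joint):
    """Compute per-position marginals of a joint distribution over token tuples."""
    n_positions = len(next(iter(joint)))
    margs = [dict() for _ in range(n_positions)]
    for tokens, p in joint.items():
        for i, tok in enumerate(tokens):
            margs[i][tok] = margs[i].get(tok, 0.0) + p
    return margs


def factorized(margs):
    """Product of per-position marginals: the independent (factorized) approximation."""
    approx = {}
    for combo in product(*(m.items() for m in margs)):
        tokens = tuple(tok for tok, _ in combo)
        prob = 1.0
        for _, p in combo:
            prob *= p
        approx[tokens] = prob
    return approx


approx = factorized(marginals(joint))

# Mass the factorized model assigns to pairs the true joint never produces,
# e.g. ("no", "pneumothorax") or ("small", "effusion").
incoherent_mass = sum(p for tokens, p in approx.items() if tokens not in joint)

for tokens, p in sorted(approx.items()):
    print(tokens, p)
print("mass on incoherent pairs:", incoherent_mass)
```

In this toy setting, sampling both positions independently in one step yields an incoherent pair half of the time. Multi-step remasking avoids this by conditioning later positions on earlier choices, and non-factorized supervision aims to teach a student the same dependencies directly.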

ECHO

Efficient Chest X-ray Report Generation
